So how do we achieve loading large volumes of data into the Redshift cluster. It means if you are having 100 million rows, there would be 100 million queries running on your Redshift cluster. Redshift executes each query one by one and loading of the data is completed once all the queries are executed. Now when i am sending across multiple rows (in this case 10), each row of data is treated a separate query. It executes each query on its cluster and then proceeds to the next stage. The main reason which i considered is that Redshift treats each and every hit to the cluster as one single query. Ideally it shouldn’t take more than 1-2 seconds. 2 minutes to load the data into redshift cluster. You will find that even for loading 10 rows of data, it takes approx.

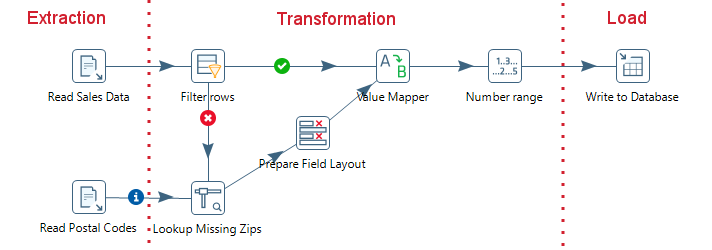

The idea here is to connect any source and try loading the data to a Redshift cluster in a traditional kettle way. We can take a simple Pentaho DI Table Input and a Table Output step as below: A sample kettle file loading data to Redshift

Now we can try to loading the data using PDI. Assuming you have successfully built up your Amazon Redshift cluster and used Pentaho to connect to the cluster.
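To put rough numbers on the row-by-row behaviour described in this post, here is a small Python sketch. It assumes that load time scales linearly from the observation of 10 rows in about 2 minutes, which is a simplification and not a measured result. The function name `estimate_row_by_row_load` is hypothetical and used only for illustration.

```python
# Figures taken from the observation in this post: 10 rows took about 2 minutes.
SAMPLE_ROWS = 10
SAMPLE_SECONDS = 120.0


def estimate_row_by_row_load(total_rows, sample_rows=SAMPLE_ROWS,
                             sample_seconds=SAMPLE_SECONDS):
    """Estimate query count and load time when every row is its own query.

    Assumption: time grows linearly with the number of rows.
    """
    seconds_per_query = sample_seconds / sample_rows
    queries = total_rows  # one query per row
    return queries, queries * seconds_per_query


queries, seconds = estimate_row_by_row_load(100_000_000)
years = seconds / (365 * 24 * 3600)
print(f"{queries:,} queries, about {seconds:,.0f} seconds (~{years:.0f} years)")
# prints: 100,000,000 queries, about 1,200,000,000 seconds (~38 years)
```

Even if the real per-query cost were far lower than this estimate, one query per row clearly does not scale to large tables.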
